Greyhound Racing Results: How to Use Past Data

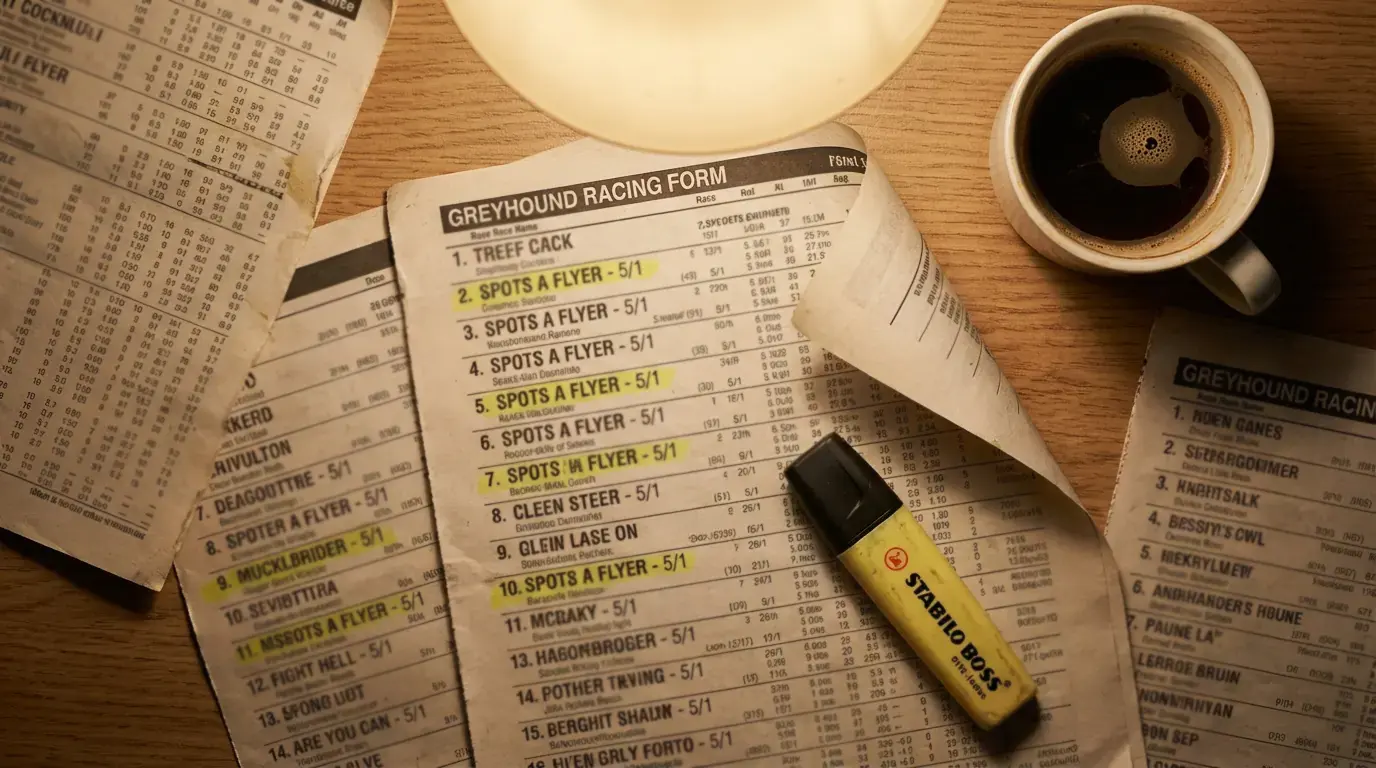

Where to Find UK Greyhound Racing Results

Results archives are the raw material of informed greyhound betting, and knowing where to find them is the first step towards using them properly. The good news is that UK greyhound racing is well documented. Every race at every track licensed by the Greyhound Board of Great Britain (GBGB) is recorded with full finishing positions, times, sectional splits, race comments, Starting Prices, and going reports. The data exists. The question is where to access it and how much of it you need.

The Racing Post is the most widely used source of greyhound results in the UK. Its online results service covers every GBGB-licensed meeting, with archives stretching back several years. Each result entry includes the full finishing order, race time, sectional time to the first bend, individual dog comments describing the run, trap draws, Starting Prices, and forecast and tricast dividends. For most punters, the Racing Post’s results archive provides everything needed for form analysis without requiring a paid subscription for basic access.

The Greyhound Board of Great Britain publishes results through its own channels, and these carry an added layer of authority as the governing body’s official record. GBGB results confirm grading, track conditions, and regulatory details that may not appear in third-party coverage. For verification purposes, particularly when cross-referencing a dog’s grade history or checking whether a race was run under standard conditions, GBGB data serves as the definitive source.

Individual track websites often publish their own results, sometimes within minutes of a race finishing. These are useful for punters who focus on a single circuit, as the presentation is often tailored to that track’s specific distances and grading structure. Some track sites also publish running-order data — showing which dogs led at the first bend and at the third bend — which gives a clearer picture of how the race unfolded than the finishing order alone.

Specialist data services provide a deeper layer. Platforms such as Timeform offer greyhound results with added analytical context: speed ratings, performance ratings, and adjusted times that account for track conditions and going. These services typically require a subscription, but for punters who bet frequently and want standardised data across different tracks, the investment in a form database can pay for itself through improved selection quality. The value of a rated performance figure is that it allows you to compare dogs across different tracks and distances on a common scale — something raw times alone cannot do.

One source worth knowing about is Betfair’s greyhound results section, which includes exchange prices alongside the SP. This is useful for any punter interested in how the exchange market priced a dog compared to the traditional bookmaker market, and over time it reveals patterns in where the exchange was more or less accurate than the SP in reflecting true probabilities.
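Comparing an exchange price with the SP is easier once both are expressed as implied probabilities. The sketch below assumes the SP arrives as a fractional string such as "5/2" and the exchange price as decimal odds. The function names are illustrative only, and the figures ignore bookmaker margin and exchange commission.

```python
def implied_prob_fractional(sp: str) -> float:
    """Implied probability from fractional odds written as 'num/den', e.g. '5/2'."""
    num, den = (int(part) for part in sp.split("/"))
    if num <= 0 or den <= 0:
        raise ValueError("odds must be positive")
    return den / (num + den)


def implied_prob_decimal(price: float) -> float:
    """Implied probability from decimal odds, e.g. 4.0."""
    if price <= 1.0:
        raise ValueError("decimal odds must be greater than 1.0")
    return 1.0 / price


# Hypothetical example: one dog returned at 5/2 SP and 4.0 on the exchange.
sp_prob = implied_prob_fractional("5/2")
exchange_prob = implied_prob_decimal(4.0)
print(f"SP implies {sp_prob:.3f}, exchange implies {exchange_prob:.3f}")
```

A lower implied probability on the exchange means the exchange offered the longer price. Whether that gap is meaningful only becomes clear over many races.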

Turning Results Into Actionable Patterns

A result is a fact. A pattern is an edge — but only if the sample size is large enough to distinguish signal from noise. The difference between a punter who reads results and one who profits from them lies in how the data is filtered, aggregated, and applied.

The first level of pattern recognition is individual dog form. Reading a dog’s last six results — the standard form line on most racecards — tells you how it has been performing recently. But the form line alone is a compressed summary. To extract actionable information, you need to read behind the numbers. A dog that has finished third in its last three races might look moderate on paper. If those three thirds were all in A2 races from poor draws with first-bend interference noted in the comments, the dog’s underlying ability is considerably better than the raw finishing positions suggest. Conversely, a dog that has won two of its last three might look sharp — until you notice that both wins came in A8 company on nights with multiple non-runners reducing the field quality.

The second level is track-specific patterns. Over a sufficiently large sample, results at a given track reveal structural tendencies: which traps win most often at each distance, how early-pace dogs perform compared to closers, whether the rail produces a measurable advantage on the bends, and how race times vary between meetings held at different times of day or under different conditions. These patterns tend to persist because they reflect the physical properties of the track (its geometry, surface, and configuration), which stay the same from one meeting to the next unless the track is resurfaced or reconfigured. A pattern based on 500 races at a specific track over a specific distance is likely to be reliable. A pattern based on 30 races over three weeks is likely to be noise.
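A trap-by-trap tally is the simplest way to start looking at track-specific patterns. The sketch below assumes you have already extracted the winning trap number for each race at one track and one distance. The function name and the sample list are made up for illustration, and twelve races is far too few to draw any conclusion from.

```python
from collections import Counter


def trap_win_rates(winning_traps, num_traps=6):
    """Return {trap: (wins, win_rate)} for a list of winning trap numbers."""
    total = len(winning_traps)
    if total == 0:
        raise ValueError("no races supplied")
    wins = Counter(winning_traps)
    return {
        trap: (wins[trap], wins[trap] / total)
        for trap in range(1, num_traps + 1)
    }


# Hypothetical sample: winning trap in each of 12 races (illustration only).
sample = [1, 3, 1, 6, 2, 1, 4, 5, 1, 2, 3, 1]
for trap, (wins, rate) in trap_win_rates(sample).items():
    print(f"Trap {trap}: {wins} wins, {rate:.1%}")
```

Keeping the raw win count alongside the rate makes it harder to forget how small the sample behind a percentage is.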

The third level is trainer and kennel patterns. Some trainers bring dogs to peak form on specific schedules. Some kennels perform notably better or worse at particular tracks, often because of proximity — dogs that travel shorter distances to a meeting may arrive fresher. Trainer strike rates at individual tracks, filtered by grade, can reveal consistent edges that the general market does not fully price in. A trainer with a 25% win rate at a specific circuit, against a track average of 17%, represents a measurable advantage — provided the sample covers at least a full racing season.
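One way to check whether a trainer’s strike rate really stands apart from the track average is to put a confidence interval around it. The sketch below uses the Wilson score interval, which is my choice of method rather than anything the sources above prescribe. The run counts are hypothetical, and z = 1.96 corresponds to roughly 95% confidence.

```python
from math import sqrt


def wilson_interval(wins, runs, z=1.96):
    """Wilson score interval for a win rate; z=1.96 gives roughly 95% coverage."""
    if runs <= 0 or not 0 <= wins <= runs:
        raise ValueError("need runs > 0 and 0 <= wins <= runs")
    p = wins / runs
    denom = 1 + z * z / runs
    centre = (p + z * z / (2 * runs)) / denom
    half = z * sqrt(p * (1 - p) / runs + z * z / (4 * runs * runs)) / denom
    return centre - half, centre + half


TRACK_AVERAGE = 0.17  # figure quoted in the text above

# Hypothetical trainers, both winning 25% of the time on different sample sizes.
for wins, runs in [(10, 40), (50, 200)]:
    low, high = wilson_interval(wins, runs)
    verdict = "above" if low > TRACK_AVERAGE else "not clearly above"
    print(f"{wins}/{runs}: {low:.3f} to {high:.3f} ({verdict} the track average)")
```

The same 25% strike rate leads to different conclusions at 40 runs and at 200 runs, which is why the length of the sample matters as much as the headline figure.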

The discipline in all pattern recognition is the same: demand a sufficient sample before acting. In greyhound racing, where each track runs multiple meetings per week and each meeting contains ten to twelve races, useful data accumulates quickly. A month of racing at a single track can produce over 400 individual results — enough to begin distinguishing structural tendencies from random variation.

Historical Results Pitfalls: Small Samples and Changing Conditions

Last month’s data at a track that was resurfaced last week is not data — it is noise. This is the most common trap in greyhound results analysis, and it catches experienced punters as readily as newcomers. Historical results are only useful if the conditions under which they were produced still apply. When conditions change, the historical record becomes unreliable, and any patterns derived from it need to be reassessed from scratch.

Track resurfacing is the most dramatic condition change. When a greyhound track relays or regrades its sand surface, the running characteristics can shift significantly. A track that previously favoured inside runners may become more neutral. A fast surface may become slower, or vice versa. After a resurface, direct time comparisons between pre- and post-resurfacing form are no longer valid. Dogs whose form was based on the old surface need to be reassessed on the new one, and trap bias data compiled before the change should be discarded entirely. It takes several weeks of racing on a new surface before reliable patterns begin to emerge.

Seasonal variation is subtler but equally important. UK greyhound tracks are outdoor facilities, and track conditions change with the weather. Sand surfaces run differently in wet and dry conditions, in heat and cold. Winter meetings at exposed tracks may produce slower times than summer meetings at the same circuit, not because the dogs are slower but because the surface is heavier. A dog whose form figures were compiled in July should not be directly compared with one whose form was compiled in December without adjusting for the seasonal difference in track speed. Some data services provide track-speed ratings that account for this variation. Without such adjustments, raw time comparisons across seasons are misleading.
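Where no published track-speed rating is available, a crude adjustment can be sketched by hand. The example below assumes all times come from one meeting over one distance and that you have picked a reference "standard" time for that distance. The method (shifting by the meeting’s median time), the function name, and the numbers are all illustrative assumptions, not how any data service calculates its ratings.

```python
from statistics import median


def going_allowance(meeting_times, standard_time):
    """Seconds by which the meeting ran slow (+) or fast (-) against the standard."""
    return median(meeting_times) - standard_time


def adjust_times(meeting_times, standard_time):
    """Shift every time so the meeting's median matches the standard time."""
    allowance = going_allowance(meeting_times, standard_time)
    return [round(t - allowance, 2) for t in meeting_times]


# Hypothetical December meeting on a heavy surface; 29.80s is an assumed standard.
december = [29.95, 30.10, 30.20, 30.05, 30.30]
print(round(going_allowance(december, 29.80), 2))
print(adjust_times(december, 29.80))
```

This ignores differences in grade between races at the meeting, so it is only a rough first pass compared with a properly calculated speed rating.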

The small-sample problem is the most insidious of all. Human brains are wired to see patterns, and a punter studying results will find them whether they exist or not. If Trap 4 has won four of the last ten races at a particular distance, it is tempting to conclude that Trap 4 has an edge. But four wins from ten is within the range of normal variation for a trap that has no structural advantage at all. At a 16.7% expected win rate (one in six), an unbiased trap will win four or more of ten races about 7% of the time, and with six traps in play the chance that at least one of them does so is higher still. Concluding that a trap bias is real, rather than a random fluctuation, requires hundreds of races, not dozens.
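The Trap 4 example can be checked directly with the binomial distribution. The sketch below assumes six traps with an equal one-in-six chance each and treats races as independent, which is a simplification. The function name is illustrative.

```python
from math import comb


def prob_at_least(wins, races, p):
    """P(X >= wins) when X follows a Binomial(races, p) distribution."""
    return sum(
        comb(races, k) * p**k * (1 - p) ** (races - k)
        for k in range(wins, races + 1)
    )


FAIR_SHARE = 1 / 6  # an unbiased trap in a six-dog race

# Chance an unbiased trap wins four or more of ten races.
print(f"{prob_at_least(4, 10, FAIR_SHARE):.4f}")

# The same 40% strike rate over 100 races would be far harder to explain by luck.
print(f"{prob_at_least(40, 100, FAIR_SHARE):.2e}")
```

The first figure is large enough that a streak like this will turn up regularly by chance somewhere across a track’s traps and distances. The second shows why the same percentage over a hundred races is a very different kind of evidence.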

The practical rule is conservative: treat any pattern derived from fewer than 100 races as suggestive rather than conclusive, and any pattern from fewer than 50 races as anecdotal. Cross-reference findings across different time periods. If a pattern holds across six months, it is likely structural. If it appears in one month but not the next, it was probably noise. Results data is powerful, but only when handled with the respect for sample size and changing conditions that any statistical exercise demands.
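The rule of thumb above translates into a short check. In the sketch below, the 50-race and 100-race thresholds come from the text. The function names, the label used for samples of 100 races or more, and the monthly counts are assumptions for illustration.

```python
def evidence_level(num_races):
    """Label a pattern by the number of races behind it."""
    if num_races < 50:
        return "anecdotal"
    if num_races < 100:
        return "suggestive"
    return "worth cross-checking"  # assumed label; still not proof on its own


def holds_in_every_period(period_counts, baseline):
    """True if the win rate beats the baseline in each (wins, races) period."""
    return all(
        races > 0 and wins / races > baseline
        for wins, races in period_counts
    )


# Hypothetical monthly (wins, races) counts for one trap at one distance.
steady = [(30, 120), (28, 115), (33, 125)]
patchy = [(30, 120), (15, 115)]

print(evidence_level(30), evidence_level(80), evidence_level(360))
print(holds_in_every_period(steady, 1 / 6))
print(holds_in_every_period(patchy, 1 / 6))
```

Splitting the data by period before pooling it is a simple guard against one unusual month driving the whole result.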